this post was submitted on 10 Mar 2024


Discussion of climate, how it is changing, activism around that, the politics, and the energy systems change we need in order to stabilize things.

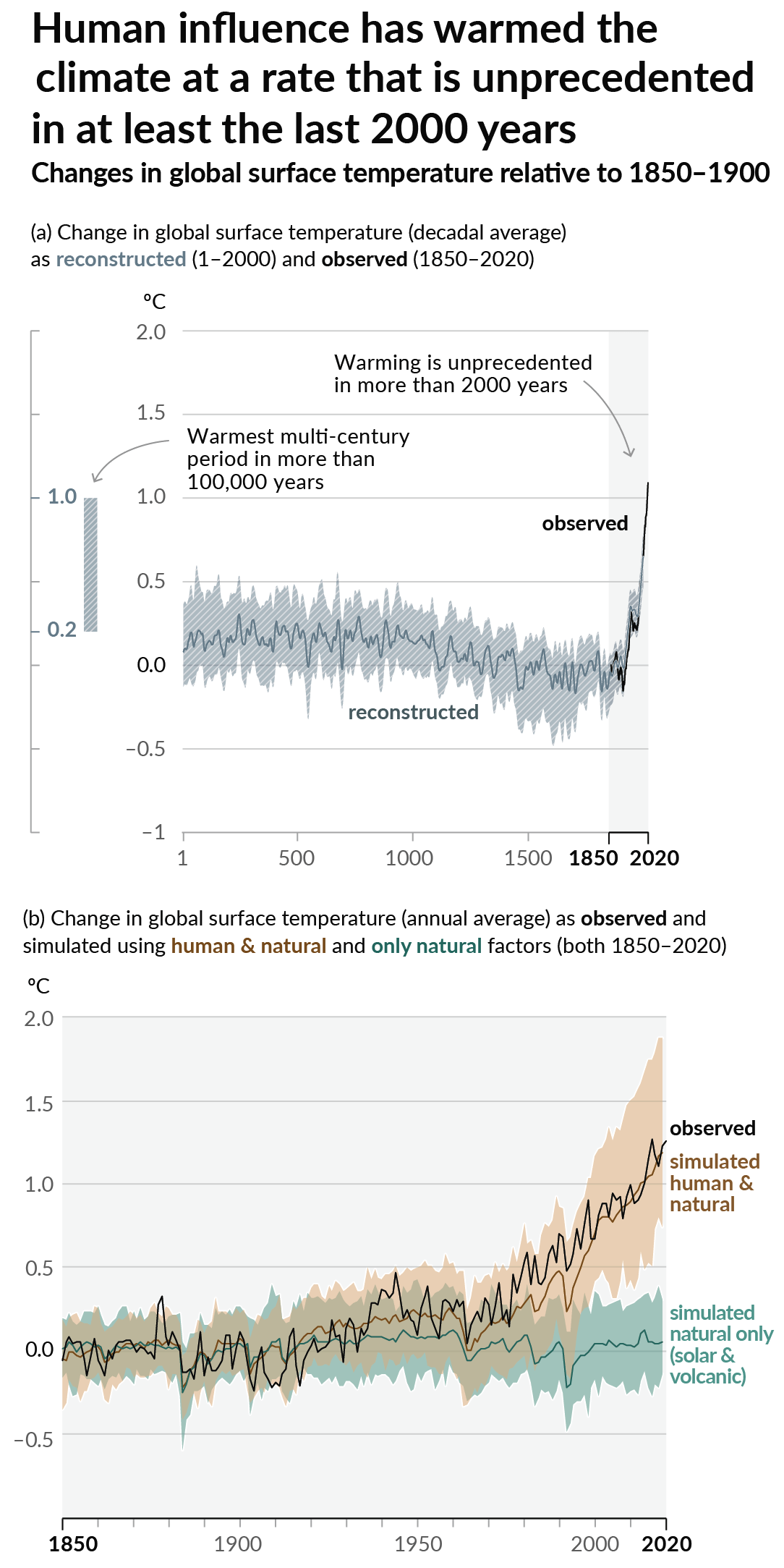

As a starting point, the burning of fossil fuels, and to a lesser extent deforestation and release of methane are responsible for the warming in recent decades:

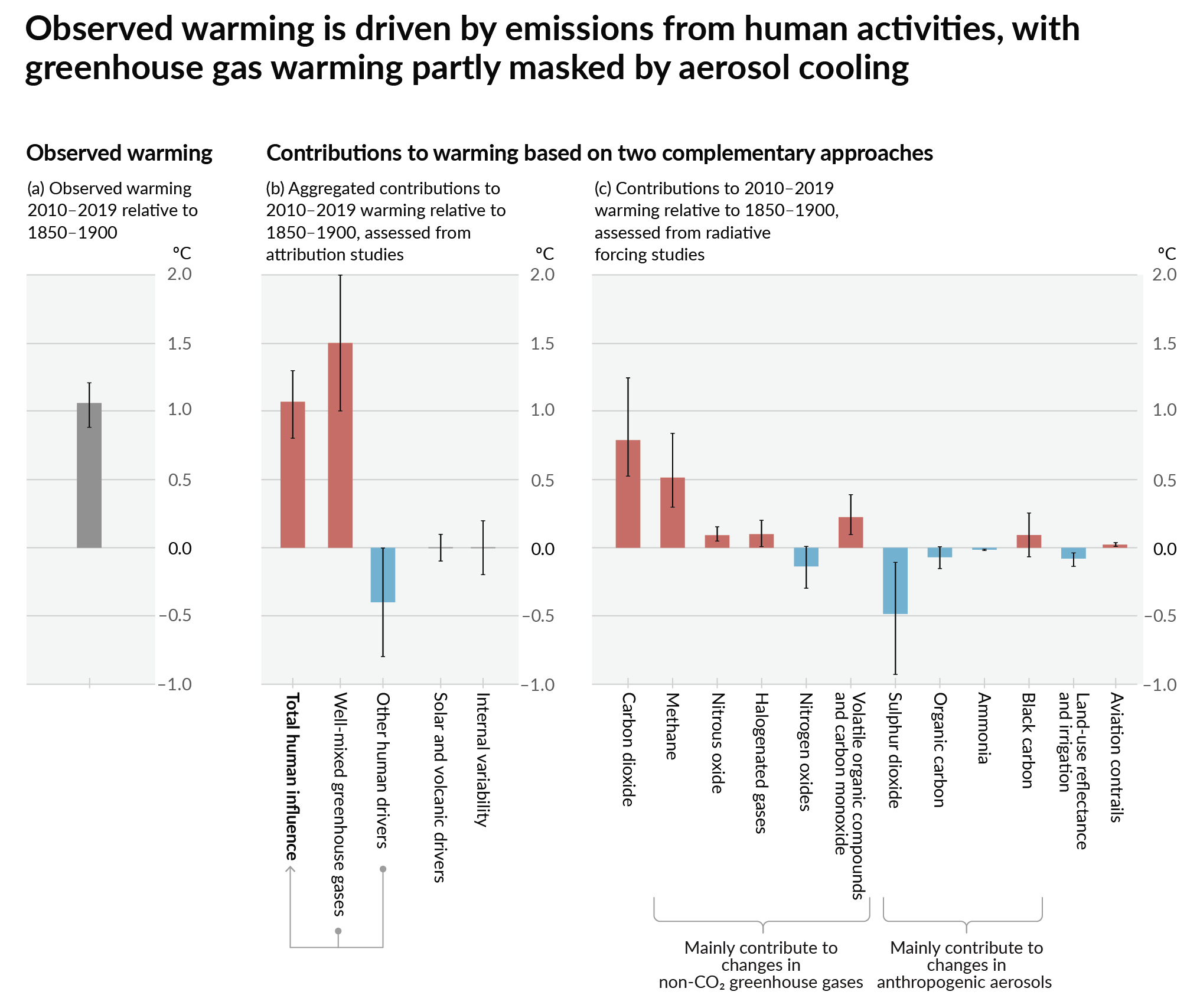

How much each change to the atmosphere has warmed the world:

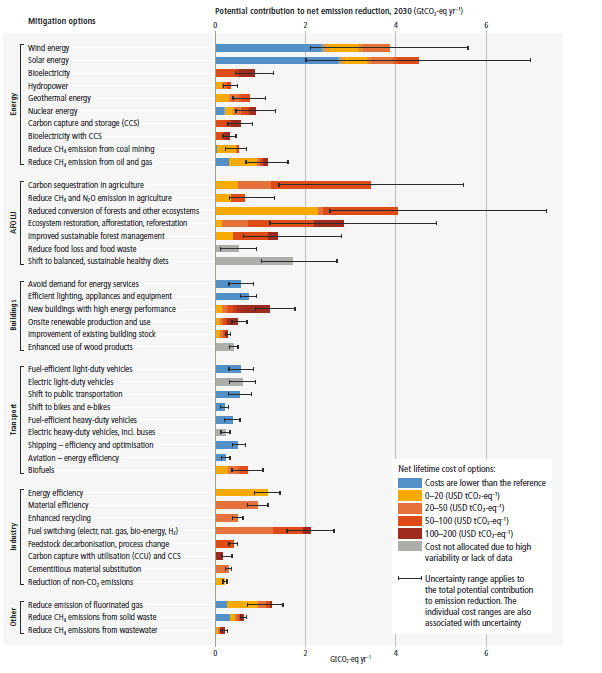

Recommended actions to cut greenhouse gas emissions in the near future:

Anti-science, inactivism, and unsupported conspiracy theories are not ok here.


So I did a little math.

This site says a single ChatGPT query consumes 0.00396 kWh.

Assume an average LED light bulb is 10 watts, so it uses 0.01 kWh per hour. So if I did the math right, no guarantees there, a single ChatGPT query is roughly equivalent to leaving a light bulb on for 24 minutes (0.00396 kWh / 0.01 kW ≈ 0.4 hours).

So if you assume the average light bulb in your house is on close to 4 hours a day, making 10 ChatGPT queries per day is the equivalent of adding a new light bulb to your house.

Which is definitely not nothing. But isn't the end of the world either.
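For anyone who wants to check the arithmetic, here is a minimal sketch in Python. The 0.00396 kWh per query is the linked site's claim and the 10 W bulb is the assumption from above; the function names are made up for illustration.

```python
# Back-of-envelope check of the query-vs-light-bulb comparison.
# QUERY_KWH is the linked site's figure (not verified here);
# BULB_WATTS is the assumed power of an average LED bulb.
QUERY_KWH = 0.00396
BULB_WATTS = 10


def bulb_minutes_per_query(query_kwh: float, bulb_watts: float) -> float:
    """Minutes a bulb must stay on to use as much energy as one query."""
    bulb_kw = bulb_watts / 1000          # watts -> kilowatts
    return query_kwh / bulb_kw * 60      # hours -> minutes


def bulb_hours_per_day(queries_per_day: int, query_kwh: float, bulb_watts: float) -> float:
    """Daily bulb-hours equivalent to a given number of queries per day."""
    return queries_per_day * bulb_minutes_per_query(query_kwh, bulb_watts) / 60


if __name__ == "__main__":
    print(f"{bulb_minutes_per_query(QUERY_KWH, BULB_WATTS):.1f} bulb-minutes per query")
    print(f"{bulb_hours_per_day(10, QUERY_KWH, BULB_WATTS):.1f} bulb-hours for 10 queries/day")
```

With the figures quoted, that comes out to about 24 bulb-minutes per query and about 4 bulb-hours for 10 queries a day.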

There's also the energy required to train the model. Inference is almost always significantly more efficient than training, because there is no error backpropagation or other training-specific computation.

Large models probably take on the order of 1,000 MWh of energy to train (GPT-3 took 284 MWh by OpenAI’s calculation). That’s not including the web scraping, data cleaning, and other associated costs, such as cooling the server farms, which is non-trivial.

A coal plant takes roughly 364 kg to 500 kg of coal to generate 1 MWh. So for GPT-3 you’d be looking at 103,376 kg of coal (~228 thousand pounds, or about 114 US tons) at minimum to train it. That’s before anyone has used it, and it doesn’t count the other associated energy costs. For comparison, a typical home may use 6 MWh per year, so just training GPT-3 could’ve powered 47 homes for an entire year.
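A rough sketch of that coal and household arithmetic, in Python. It treats the 284 figure as megawatt-hours of energy (an assumption on units), and takes the coal-per-MWh range and the 6 MWh/year home from the comment as given; the helper names are hypothetical.

```python
# Coal and household equivalents for a training run.
# All inputs are the thread's figures, not independently verified.
KG_PER_LB = 0.45359237        # definition of the avoirdupois pound
LB_PER_US_TON = 2000          # US (short) ton

TRAINING_MWH = 284            # GPT-3 figure from the comment, read as MWh (assumption)
COAL_KG_PER_MWH = (364, 500)  # low and high estimates from the comment
HOME_MWH_PER_YEAR = 6         # "typical home" figure from the comment


def coal_kg(energy_mwh: float, kg_per_mwh: float) -> float:
    """Mass of coal burned to generate the given energy."""
    return energy_mwh * kg_per_mwh


def kg_to_us_tons(kg: float) -> float:
    """Convert kilograms to US short tons."""
    return kg / KG_PER_LB / LB_PER_US_TON


def home_years(energy_mwh: float, home_mwh_per_year: float) -> float:
    """Number of homes the energy could supply for one year."""
    return energy_mwh / home_mwh_per_year


if __name__ == "__main__":
    for rate in COAL_KG_PER_MWH:
        kg = coal_kg(TRAINING_MWH, rate)
        print(f"{rate} kg/MWh: {kg:,.0f} kg of coal, {kg_to_us_tons(kg):.0f} US tons")
    print(f"{home_years(TRAINING_MWH, HOME_MWH_PER_YEAR):.1f} home-years of electricity")
```

Swapping `TRAINING_MWH` for 1000 gives the same comparison for the larger training estimate mentioned in this thread.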

Edit: also, it’s not nearly as bad as crypto mining. And as another person says it’s totally moot if we have clean sources of energy to fill the need and the grid can handle it. Unfortunately we have neither right now.

If you amortize training costs over all inference uses, I don't think 1,000 MWh is too crazy. For a model like GPT-3 there are likely millions of inference calls to split that cost between.

Sure, and I think these models may even be useful enough to warrant the cost. My point is just that this still isn’t simply running a couple of light bulbs. This is a major draw on the grid (but it likely still pales in comparison to crypto farms).

Note that most people would be better off using a model that’s trained for a specific task. For example, training an image recognition model uses vastly less energy because the models are vastly smaller, yet they’re excellent at image recognition.

The article claims 200M ChatGPT requests per day. Assuming they train a new version yearly, that's 73B requests per training run. Spreading 1,000 MWh across 73B requests yields a per-request amortized cost of about 0.014 Wh. It's nothing.

47 more households' worth of electricity just isn't a major draw on anything. We add ~500,000 households a year from natural growth.
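The amortization estimate can be sketched like this in Python. The inputs are the thread's own numbers: 200M requests per day from the article, one training run per year (an assumption), and a 1,000 MWh training cost read as energy. The comparison against 0.00396 kWh per query reuses the figure quoted earlier in the thread. Function names are hypothetical.

```python
# Amortized training energy per request, using the thread's figures.
REQUESTS_PER_DAY = 200_000_000   # article's claim
DAYS_PER_TRAINING_RUN = 365      # assumes one new model per year
TRAINING_MWH = 1000              # rough training estimate from this thread
QUERY_WH = 3.96                  # 0.00396 kWh per query, from the linked site


def requests_per_run(requests_per_day: int, days: int) -> int:
    """Total requests served between training runs."""
    return requests_per_day * days


def amortized_wh_per_request(training_mwh: float, total_requests: int) -> float:
    """Training energy spread evenly over every request, in watt-hours."""
    training_wh = training_mwh * 1_000_000   # MWh -> Wh
    return training_wh / total_requests


if __name__ == "__main__":
    total = requests_per_run(REQUESTS_PER_DAY, DAYS_PER_TRAINING_RUN)
    per_request = amortized_wh_per_request(TRAINING_MWH, total)
    print(f"{total:,} requests per training run")
    print(f"{per_request:.4f} Wh of training energy per request")
    print(f"{per_request / QUERY_WH:.2%} of the quoted per-query inference energy")
```

Under these assumptions the training share is a small fraction of a watt-hour per request, well under 1% of the per-query figure quoted earlier.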

I have a feeling it’s not going to be the ordinary individual user that’s going to drive the usage to problematic levels.

If a company can make money off of it, consuming a ridiculous amount of energy to do it is just another cost on the P & L.

(Assuming of course that the company using it either pays the electric bill, or pays a marked-up fee to some AI/cloud provider)